This question exists because it has historical significance, but it is not considered a good, on-topic question for this site, so please do not use it as evidence that you can ask similar questions here. This question and its answers are frozen and cannot be changed. More info: help center.

Following are the tweets, the originals, and how each tweet is decoded:

Downsize the image preserving the aspect ratio (if the image is color, the chroma is sampled at 1/3 the width & height of the luminance)

I then calculate the distribution of the 6 different letters within the string and select the most efficient binary tree for encoding, based on the letter frequencies. There are 15 possible binary trees.

. Perhaps not practical, but an interesting problem to think about.

U+007F (127) and U+0080 (128) are control characters. I would suggest banning those as well.


These look incredibly good considering how much unutilized storage space you have (Mona Lisa uses only 606 bits from 920 available!).


. But wait, there's more! The partitioning of these

Fixed, by assuming that the original aspect ratio is somewhere between 1:21 and 21:1, which I think is a reasonable assumption.

Apply median prediction to the image, making the sample distribution more uniform


Mona Lisa (13×20 luminance, 4×6 chroma)

Your submission should include the resulting images after decompression, along with the Twitter comment generated. If possible, you could also give a link to the source code.

    # my_geom.py (Python 2)
    from __future__ import division

    class Point:
      def __init__(self, x, y):
        self.x = x
        self.y = y
        self.xy = (x, y)

      def __eq__(self, other):
        return self.x == other.x and self.y == other.y

      def __lt__(self, other):
        return self.y < other.y or (self.y == other.y and self.x < other.x)

      # slope of the perpendicular bisector between self and other
      def inv_slope(self, other):
        return (other.x - self.x)/(self.y - other.y)

      def midpoint(self, other):
        return Point((self.x + other.x)/2, (self.y + other.y)/2)

      def dist2(self, other):
        dx = self.x - other.x
        dy = self.y - other.y
        return dx*dx + dy*dy

      # polygon covering the half of the image that lies on self's side
      # of the perpendicular bisector between self and other
      def bisected_poly(self, other, resize_width, resize_height):
        midpoint = self.midpoint(other)
        points = []
        if self.y == other.y:
          # vertical bisector
          points += (midpoint.x, 0), (midpoint.x, resize_height)
          if self.x < midpoint.x:
            points += (0, resize_height), (0, 0)
          else:
            points += (resize_width, resize_height), (resize_width, 0)
          return points
        elif self.x == other.x:
          # horizontal bisector
          points += (0, midpoint.y), (resize_width, midpoint.y)
          if self.y < midpoint.y:
            points += (resize_width, 0), (0, 0)
          else:
            points += (resize_width, resize_height), (0, resize_height)
          return points
        slope = self.inv_slope(other)
        y_intercept = midpoint.y - slope*midpoint.x
        if self.y > midpoint.y:
          points += ((resize_height - y_intercept)/slope, resize_height),
          if slope < 0:
            points += (resize_width, slope*resize_width + y_intercept), (resize_width, resize_height)
          else:
            points += (0, y_intercept), (0, resize_height)
        else:
          points += (-y_intercept/slope, 0),
          if slope < 0:
            points += (0, y_intercept), (0, 0)
          else:
            points += (resize_width, slope*resize_width + y_intercept), (resize_width, 0)
        return points

    class Region:
      def __init__(self, props=None):
        if props:
          self.greyscale = props['Greyscale']
          self.area = props['Area']
          cy, cx = props['Centroid']
          # snap the centroid to the nearest raster point
          if self.greyscale:
            self.centroid = Point(int(cx/11)*11+5, int(cy/11)*11+5)
          else:
            self.centroid = Point(int(cx/13)*13+6, int(cy/13)*13+6)
        self.num_pixels = 0
        self.r_total = 0
        self.g_total = 0
        self.b_total = 0

      def __lt__(self, other):
        return self.centroid < other.centroid

      # accumulate a pixel and update the quantised average color
      def add_point(self, rgb):
        r, g, b = rgb
        self.r_total += r
        self.g_total += g
        self.b_total += b
        self.num_pixels += 1
        if self.greyscale:
          self.avg_color = int((3.2*self.r_total + 10.7*self.g_total + 1.1*self.b_total)/self.num_pixels + 0.5)*17
        else:
          self.avg_color = (
            int(5*self.r_total/self.num_pixels + 0.5)*51,
            int(5*self.g_total/self.num_pixels + 0.5)*51,
            int(5*self.b_total/self.num_pixels + 0.5)*51)

      # pack raster position and color into a single number
      def to_num(self, raster_width):
        if self.greyscale:
          raster_x = int((self.centroid.x - 5)/11)
          raster_y = int((self.centroid.y - 5)/11)
          return (raster_y*raster_width + raster_x)*16 + self.avg_color//17
        else:
          r, g, b = self.avg_color
          r //= 51
          g //= 51
          b //= 51
          raster_x = int((self.centroid.x - 6)/13)
          raster_y = int((self.centroid.y - 6)/13)
          return (raster_y*raster_width + raster_x)*216 + r*36 + g*6 + b

      # inverse of to_num
      def from_num(self, num, raster_width, greyscale):
        self.greyscale = greyscale
        if greyscale:
          self.avg_color = num%16*17
          num //= 16
          raster_x, raster_y = num%raster_width, num//raster_width
          self.centroid = Point(raster_x*11 + 5, raster_y*11+5)
        else:
          rgb = num%216
          r, g, b = rgb//36, rgb//6%6, rgb%6
          self.avg_color = (r*51, g*51, b*51)
          num //= 216
          raster_x, raster_y = num%raster_width, num//raster_width
          self.centroid = Point(raster_x*13 + 6, raster_y*13 + 6)

    # permutation -> number (factorial number system)
    def perm2num(perm):
      num = 0
      size = len(perm)
      for i in range(size):
        num *= size-i
        for j in range(i, size): num += perm[j]<perm[i]
      return num

    # number -> permutation of range(size)
    def num2perm(num, size):
      perm = [0]*size
      for i in range(size-1, -1, -1):
        perm[i] = int(num%(size-i))
        num //= size-i
        for j in range(i+1, size): perm[j] += perm[j] >= perm[i]
      return perm

    def permute(arr, perm):
      size = len(arr)
      out = [0] * size
      for i in range(size):
        val = perm[i]
        out[i] = arr[val]
      return out

The resulting permutation is then applied to the original 56 regions. The original number (and thus the additional 14 regions) can likewise be extracted by converting the permutation of the 56 encoded regions into its numerical representation.

n@~c[wFv8mD2LL!g_(~CO&MG+u-jTKXJy/“S@m26CQ=[zejo,gFk0A%i4kE]N ?R~^8!Ki*KM52u,M(his+BxqDCgUul*N9tNb\lfgn@HhX77S@TZfkCO69!

Reduce the bit depth to 4 bits per sample

Using the permutations to encode additional data is rather clever.


Apply adaptive range compression to the image.

object could also be used to store more information. For example, there are

The dimensions of the Mona Lisa are a bit off, due to the way I'm storing the aspect ratio. I'll need to use a different system.

It's ugly, but if you see any room for improvement, let me know. I hacked it together as I went along. I LEARNED A LOT FROM THIS CHALLENGE. Thank you OP for posting it!

After that's done, I create a string with each pixel color represented by one of the letters [A-F].

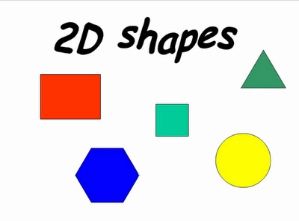

The Hindenburg, Mountainous Landscape, Mona Lisa, 2D Shapes

Anyway, I encode the binary string into base64 characters. When decoding the string, the process is done in reverse: all the pixels are assigned their appropriate color, the image is scaled to twice the encoded size (maximum 40 pixels in X or Y, whichever is larger), and a convolution matrix is applied to the whole thing to smooth out the colors.
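The bit-to-text step described above can be sketched as follows. This is a minimal illustration, not the author's code: it assumes the standard base64 alphabet (the post does not say which 64 characters are used), and `bits_to_text` / `text_to_bits` are hypothetical helper names. Every character of that alphabet falls within the allowed 32-126 range.

```python
import string

# Assumption: the standard base64 alphabet; the post does not specify one.
ALPHABET = string.ascii_uppercase + string.ascii_lowercase + string.digits + "+/"


def bits_to_text(bits):
    """Pack a string of '0'/'1' characters into 6-bit printable characters."""
    bits = bits + "0" * (-len(bits) % 6)          # pad to a multiple of 6 bits
    return "".join(ALPHABET[int(bits[i:i + 6], 2)] for i in range(0, len(bits), 6))


def text_to_bits(text):
    """Inverse of bits_to_text (padding bits are returned as trailing zeros)."""
    return "".join(format(ALPHABET.index(c), "06b") for c in text)


stream = "111000101"                               # 9 bits, padded to 12
text = bits_to_text(stream)
print(text, text_to_bits(text).startswith(stream))
```

Six bits per character is a little less than the roughly 6.57 bits a full 95-symbol alphabet could carry, which is the price of the simpler encoding.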

    package main

    import (
        "image"
        "image/color"
        _ "image/jpeg"
        "image/png"
        "math"
        "math/big"
        "os"
    )

    // we have 919 bits to play with: floor(log_2(95^140))

    // encode_region(r):
    //   0
    //      color of region (12 bits, 4 bits each color)
    //   or
    //   1
    //      dividing line through region
    //        2 bits - one of 4 anchor points
    //        4 bits - one of 16 angles
    //      encode_region(r1)
    //      encode_region(r2)
    //
    // start with single region
    // pick leaf region with most contrast, split it

    type Region struct {
        points   []image.Point
        anchor   int // 0-3
        angle    int // 0-15
        children [2]*Region
    }

    // mean color of region
    func (region *Region) meanColor(img image.Image) (float64, float64, float64) {
        red := 0.0
        green := 0.0
        blue := 0.0
        num := 0
        for _, p := range region.points {
            r, g, b, _ := img.At(p.X, p.Y).RGBA()
            red += float64(r)
            green += float64(g)
            blue += float64(b)
            num++
        }
        return red / float64(num), green / float64(num), blue / float64(num)
    }

    // total non-uniformity in region's color
    func (region *Region) deviation(img image.Image) float64 {
        mr, mg, mb := region.meanColor(img)
        d := 0.0
        for _, p := range region.points {
            r, g, b, _ := img.At(p.X, p.Y).RGBA()
            fr, fg, fb := float64(r), float64(g), float64(b)
            d += (fr-mr)*(fr-mr) + (fg-mg)*(fg-mg) + (fb-mb)*(fb-mb)
        }
        return d
    }

    // centroid of region
    func (region *Region) centroid() (float64, float64) {
        cx := 0
        cy := 0
        num := 0
        for _, p := range region.points {
            cx += p.X
            cy += p.Y
            num++
        }
        return float64(cx) / float64(num), float64(cy) / float64(num)
    }

    // a few points in (or near) the region.
    func (region *Region) anchors() [4][2]float64 {
        cx, cy := region.centroid()

        xweight := [4]int{1, 1, 3, 3}
        yweight := [4]int{1, 3, 1, 3}
        var result [4][2]float64
        for i := 0; i < 4; i++ {
            dx := 0
            dy := 0
            numx := 0
            numy := 0
            for _, p := range region.points {
                if float64(p.X) > cx {
                    dx += xweight[i] * p.X
                    numx += xweight[i]
                } else {
                    dx += (4 - xweight[i]) * p.X
                    numx += 4 - xweight[i]
                }
                if float64(p.Y) > cy {
                    dy += yweight[i] * p.Y
                    numy += yweight[i]
                } else {
                    dy += (4 - yweight[i]) * p.Y
                    numy += 4 - yweight[i]
                }
            }
            result[i][0] = float64(dx) / float64(numx)
            result[i][1] = float64(dy) / float64(numy)
        }
        return result
    }

    func (region *Region) split(img image.Image) (*Region, *Region) {
        anchors := region.anchors()
        // maximize the difference between the average color on the two sides
        maxdiff := 0.0
        var maxa *Region = nil
        var maxb *Region = nil
        maxanchor := 0
        maxangle := 0
        for anchor := 0; anchor < 4; anchor++ {
            for angle := 0; angle < 16; angle++ {
                sin, cos := math.Sincos(float64(angle) * math.Pi / 16.0)
                a := new(Region)
                b := new(Region)
                for _, p := range region.points {
                    dx := float64(p.X) - anchors[anchor][0]
                    dy := float64(p.Y) - anchors[anchor][1]
                    if dx*sin+dy*cos >= 0 {
                        a.points = append(a.points, p)
                    } else {
                        b.points = append(b.points, p)
                    }
                }
                if len(a.points) == 0 || len(b.points) == 0 {
                    continue
                }
                a_red, a_green, a_blue := a.meanColor(img)
                b_red, b_green, b_blue := b.meanColor(img)
                diff := math.Abs(a_red-b_red) + math.Abs(a_green-b_green) + math.Abs(a_blue-b_blue)
                if diff >= maxdiff {
                    maxdiff = diff
                    maxa = a
                    maxb = b
                    maxanchor = anchor
                    maxangle = angle
                }
            }
        }
        region.anchor = maxanchor
        region.angle = maxangle
        region.children[0] = maxa
        region.children[1] = maxb
        return maxa, maxb
    }

    // split regions take 7 bits plus their descendents
    // unsplit regions take 13 bits
    // so each split saves 13-7=6 bits on the parent region
    // and costs 2*13 = 26 bits on the children, for a net of 20 bits/split
    func (region *Region) encode(img image.Image) []int {
        bits := make([]int, 0)
        if region.children[0] != nil {
            bits = append(bits, 1)
            d := region.anchor
            a := region.angle
            bits = append(bits, d&1, d>>1&1)
            bits = append(bits, a&1, a>>1&1, a>>2&1, a>>3&1)
            bits = append(bits, region.children[0].encode(img)...)
            bits = append(bits, region.children[1].encode(img)...)
        } else {
            bits = append(bits, 0)
            r, g, b := region.meanColor(img)
            kr := int(r / 256. / 16.)
            kg := int(g / 256. / 16.)
            kb := int(b / 256. / 16.)
            bits = append(bits, kr&1, kr>>1&1, kr>>2&1, kr>>3)
            bits = append(bits, kg&1, kg>>1&1, kg>>2&1, kg>>3)
            bits = append(bits, kb&1, kb>>1&1, kb>>2&1, kb>>3)
        }
        return bits
    }

    func encode(name string) []byte {
        file, _ := os.Open(name)
        img, _, _ := image.Decode(file)

        // encoding bit stream
        bits := make([]int, 0)

        // start by encoding the bounds
        bounds := img.Bounds()
        w := bounds.Max.X - bounds.Min.X
        for ; w > 3; w >>= 1 {
            bits = append(bits, 1, w&1)
        }
        bits = append(bits, 0, w&1)
        h := bounds.Max.Y - bounds.Min.Y
        for ; h > 3; h >>= 1 {
            bits = append(bits, 1, h&1)
        }
        bits = append(bits, 0, h&1)

        // make new region containing whole image
        region := new(Region)
        region.children[0] = nil
        region.children[1] = nil
        for y := bounds.Min.Y; y < bounds.Max.Y; y++ {
            for x := bounds.Min.X; x < bounds.Max.X; x++ {
                region.points = append(region.points, image.Point{x, y})
            }
        }

        // split the region with the most contrast until we're out of bits.
        regions := make([]*Region, 1)
        regions[0] = region
        for bitcnt := len(bits) + 13; bitcnt <= 919-20; bitcnt += 20 {
            var best_reg *Region
            best_dev := -1.0
            for _, reg := range regions {
                if reg.children[0] != nil {
                    continue
                }
                dev := reg.deviation(img)
                if dev > best_dev {
                    best_reg = reg
                    best_dev = dev
                }
            }
            a, b := best_reg.split(img)
            regions = append(regions, a, b)
        }

        // encode regions
        bits = append(bits, region.encode(img)...)

        // convert to tweet
        n := big.NewInt(0)
        for i := 0; i < len(bits); i++ {
            n.SetBit(n, i, uint(bits[i]))
        }
        s := make([]byte, 0)
        r := new(big.Int)
        for i := 0; i < 140; i++ {
            n.DivMod(n, big.NewInt(95), r)
            s = append(s, byte(r.Int64()+32))
        }
        return s
    }

    // decodes and fills in region.  returns number of bits used.
    func (region *Region) decode(bits []int, img *image.RGBA) int {
        if bits[0] == 1 {
            anchors := region.anchors()
            anchor := bits[1] + bits[2]*2
            angle := bits[3] + bits[4]*2 + bits[5]*4 + bits[6]*8
            sin, cos := math.Sincos(float64(angle) * math.Pi / 16.)
            a := new(Region)
            b := new(Region)
            for _, p := range region.points {
                dx := float64(p.X) - anchors[anchor][0]
                dy := float64(p.Y) - anchors[anchor][1]
                if dx*sin+dy*cos >= 0 {
                    a.points = append(a.points, p)
                } else {
                    b.points = append(b.points, p)
                }
            }
            x := a.decode(bits[7:], img)
            y := b.decode(bits[7+x:], img)
            return 7 + x + y
        }
        r := bits[1] + bits[2]*2 + bits[3]*4 + bits[4]*8
        g := bits[5] + bits[6]*2 + bits[7]*4 + bits[8]*8
        b := bits[9] + bits[10]*2 + bits[11]*4 + bits[12]*8
        c := color.RGBA{uint8(r*16 + 8), uint8(g*16 + 8), uint8(b*16 + 8), 255}
        for _, p := range region.points {
            img.Set(p.X, p.Y, c)
        }
        return 13
    }

    func decode(name string) image.Image {
        file, _ := os.Open(name)
        length, _ := file.Seek(0, 2)
        file.Seek(0, 0)
        tweet := make([]byte, length)
        file.Read(tweet)

        // convert to bit string
        n := big.NewInt(0)
        m := big.NewInt(1)
        for _, c := range tweet {
            v := big.NewInt(int64(c - 32))
            v.Mul(v, m)
            n.Add(n, v)
            m.Mul(m, big.NewInt(95))
        }
        bits := make([]int, 0)
        for n.Sign() != 0 {
            // Bit(0) reads the lowest bit; Int64() is undefined for values this large
            bits = append(bits, int(n.Bit(0)))
            n.Rsh(n, 1)
        }
        for len(bits) < 919 {
            bits = append(bits, 0)
        }

        // extract width and height
        w := 0
        k := 1
        for bits[0] == 1 {
            w += k * bits[1]
            k <<= 1
            bits = bits[2:]
        }
        w += k * (2 + bits[1])
        bits = bits[2:]
        h := 0
        k = 1
        for bits[0] == 1 {
            h += k * bits[1]
            k <<= 1
            bits = bits[2:]
        }
        h += k * (2 + bits[1])
        bits = bits[2:]

        // make new region containing whole image
        region := new(Region)
        region.children[0] = nil
        region.children[1] = nil
        for y := 0; y < h; y++ {
            for x := 0; x < w; x++ {
                region.points = append(region.points, image.Point{x, y})
            }
        }

        // new image
        img := image.NewRGBA(image.Rectangle{image.Point{0, 0}, image.Point{w, h}})

        // decode regions
        region.decode(bits, img)

        return img
    }

    func main() {
        if os.Args[1] == "encode" {
            s := encode(os.Args[2])
            file, _ := os.Create(os.Args[3])
            file.Write(s)
            file.Close()
        }
        if os.Args[1] == "decode" {
            img := decode(os.Args[2])
            file, _ := os.Create(os.Args[3])
            png.Encode(file, img)
            file.Close()
        }
    }

Next I recolor each pixel of the new image to its closest match on a 6 color greyscale palette.
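A small sketch of this recoloring step, combined with the [A-F] lettering the answer describes. The palette values and the letter order are assumptions for illustration (six evenly spaced greys, A darkest); the post does not list the actual palette, and `to_letters` is a hypothetical name.

```python
# Assumption: six evenly spaced grey levels, lettered A (darkest) to F (lightest).
PALETTE = [0, 51, 102, 153, 204, 255]
LETTERS = "ABCDEF"


def to_letters(pixels):
    """Map each 0..255 grey value to the letter of its nearest palette entry."""
    out = []
    for value in pixels:
        index = min(range(len(PALETTE)), key=lambda i: abs(PALETTE[i] - value))
        out.append(LETTERS[index])
    return "".join(out)


print(to_letters([0, 30, 128, 200, 255]))   # ABDEF
```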

That's nothing short of amazing.


When decoding, each of these regions is treated as a 3D cone extended into infinity, with its vertex at the centroid of the region, as viewed from above (a.k.a. a Voronoi Diagram). The borders are then blended together to create the final product.
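The Voronoi reading of that description can be illustrated with a brute-force sketch: every pixel simply takes the color of its nearest centroid. This is not how the posted decoder works (it draws bisector polygons with PIL and then blends the borders); `render_voronoi` and the seed format are assumptions made for the example.

```python
def render_voronoi(width, height, seeds):
    """Color every pixel with the color of its nearest seed.

    seeds is a list of ((x, y), color) pairs; the result is a list of rows.
    Brute force, O(width * height * len(seeds)), which is fine for tiny images.
    """
    image = []
    for y in range(height):
        row = []
        for x in range(width):
            # squared Euclidean distance is enough to find the nearest seed
            _, color = min(seeds, key=lambda s: (s[0][0] - x) ** 2 + (s[0][1] - y) ** 2)
            row.append(color)
        image.append(row)
    return image


cells = render_voronoi(6, 2, [((0, 0), "red"), ((5, 0), "blue")])
print(cells[0])
```

Viewed from above, a cone rising from each centroid is lowest exactly where that centroid is the nearest one, which is why the two descriptions agree.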

GmLB&ep^m40dPs%V[4&~F[Yt-sNceB6LCs/bv`\4TB_P Rr7Pjdk7*2=gssBkR$![ROG6XsAEtnP=OWDP6&h^l+LbLr4%R15ZcD?J6E.(W*?d9wdJ

Really, really awesome. Can you make a gist with this? <3

Doesn't Twitter allow Unicode to some extent?

Encoded with --ratio 60, and decoded with the --no-blending option.

What follows is the stream of bits representing the image. Each pixel and its color is represented by either 2 or 3 bits. This allows me to store at least 2 and up to 3 pixels' worth of information for every printed ASCII character. Here's a sample of binary tree 1110, which is used by the Mona Lisa:

You must write a program (possibly the same program) that can take the encoded text and output a decoded version of the photograph.

The second one was encoded with the --greyscale option.

I load the source image and resize it to a maximum height or width (depending on orientation, portrait/landscape) of 20 pixels.

objective primary winning criterion

I start my bit stream with a single bit (1 or 0), depending on whether the image is tall or wide. I then use the next 4 bits in the stream to inform the decoder which binary tree should be used to decode the image.

The letters E (00) and F (10) are the most common colors in the Mona Lisa. A (010), B (011), C (110), and D (111) are the least frequent.

2D Shapes would probably look better with blending turned off. I'll likely add a flag for that.

See if the size of the compressed image is <= 112 bytes

The Hindenburg could be improved a lot. The color palette I'm using only has 6 shades of grey. If I introduced a greyscale-only mode, I could use the extra information to increase the color depth, number of regions, number of raster points, or any combination of the three.

The Hindenburg picture looks pretty crappy, but the others I like.

The text created by the program must be at most 140 characters long and must only contain characters whose code points are in the range of 32-126, inclusive.
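As a rough illustration of what this rule allows: 95 printable characters over 140 positions give floor(log2(95^140)) = 919 whole bits, or about 114.97 bytes. The sketch below treats the payload as one big integer written in base 95; `int_to_tweet` and `tweet_to_int` are hypothetical helper names, not part of any submission.

```python
import math

ALPHABET_SIZE = 126 - 32 + 1                            # 95 printable characters
TWEET_LENGTH = 140
CAPACITY_BITS = (ALPHABET_SIZE ** TWEET_LENGTH).bit_length() - 1   # whole bits
CAPACITY_BYTES = TWEET_LENGTH * math.log2(ALPHABET_SIZE) / 8


def int_to_tweet(n, length=TWEET_LENGTH):
    """Write a non-negative integer as `length` printable ASCII characters."""
    if n < 0 or n >= ALPHABET_SIZE ** length:
        raise ValueError("value does not fit in the tweet")
    chars = []
    for _ in range(length):
        n, digit = divmod(n, ALPHABET_SIZE)
        chars.append(chr(32 + digit))                   # code points 32..126
    return "".join(reversed(chars))


def tweet_to_int(text):
    """Inverse of int_to_tweet."""
    n = 0
    for c in text:
        n = n * ALPHABET_SIZE + (ord(c) - 32)
    return n


payload = 2 ** CAPACITY_BITS - 1                        # largest 919-bit value
tweet = int_to_tweet(payload)
print(CAPACITY_BITS, round(CAPACITY_BYTES, 2), len(tweet), tweet_to_int(tweet) == payload)
```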

If this question can be reworded to fit the rules in the help center, please edit the question.

option to the encoder, which does all three.


You must write a program that can take an image and output the encoded text.

and split the image into palette blocks (in this case, 2×2 pixels) of a front and back color.

    from my_geom import *

    # this can be any value from 0 to 56!, and it will map unambiguously to a permutation
    num = 9
    perm = num2perm(num, 56)
    print perm
    print perm2num(perm)

If you're attentive, you may have noticed that 140 / 2.5 = 56 regions, and not 70 as I stated earlier. Notice, however, that each of these regions is a unique, comparable object, which may be listed in any order. Because of this, we can use the permutation of the first 56 regions to encode for the other 14, as well as having a few bits left over to store the aspect ratio.

I know the colours are wrong, but I actually like the Mona Lisa. If I removed the blur (which wouldn't be too hard), it's a reasonable cubist impression :p

Your program can use external libraries and files, but cannot require an internet connection or a connection to other computers.

I've improved my method by adding actual compression. It now operates by iteratively doing the following:

@rubik it is incredibly lossy, as are all of the solutions to this challenge 😉


There is some room for improvement, namely that not all of the available bytes are typically used, but I feel I'm at the point of significantly diminishing returns for downsampling + lossless compression.

Anyway, here's the current code: pastebin link

The largest image that fits in the 112 bytes is then used as the final image, with the remaining two bytes used to store the width & height of the compressed image, plus a flag indicating if the image is in color. For decoding, the process is reversed, and the image scaled up so the smaller dimension is 128.
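The "shrink until it fits" search can be sketched as below. It is only an outline of the idea under loud assumptions: `zlib` stands in for the answer's adaptive range coder, the median-prediction step is left out, the input is greyscale only, and all function names are hypothetical.

```python
import zlib


def downsample(pixels, width, height):
    """Nearest-neighbour resize of a 2-D list of 0..255 grey values."""
    src_h, src_w = len(pixels), len(pixels[0])
    return [[pixels[y * src_h // height][x * src_w // width] for x in range(width)]
            for y in range(height)]


def pack_4bit(rows):
    """Reduce to 4 bits per sample and pack two samples into each byte."""
    samples = [value >> 4 for row in rows for value in row]
    if len(samples) % 2:
        samples.append(0)
    return bytes((samples[i] << 4) | samples[i + 1] for i in range(0, len(samples), 2))


def largest_fit(pixels, budget=112):
    """Try ever smaller sizes (aspect ratio preserved) until the data fits."""
    src_h, src_w = len(pixels), len(pixels[0])
    for width in range(src_w, 0, -1):
        height = max(1, round(width * src_h / src_w))
        # zlib is a stand-in here, NOT the range coder used in the answer
        data = zlib.compress(pack_4bit(downsample(pixels, width, height)), 9)
        if len(data) <= budget:
            return width, height, data
    raise ValueError("even a 1-pixel-wide image does not fit")


gradient = [[(x * 4 + y) % 256 for x in range(64)] for y in range(48)]
width, height, data = largest_fit(gradient)
print(width, height, len(data))
```

A general-purpose compressor carries more fixed overhead than a purpose-built range coder, so the sizes this sketch reaches will differ from those quoted in the answer.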

The centroid of each region is located (to the nearest raster point on a grid containing no more than 402 points), as well as its average color (from a 216 color palette), and each of these regions is encoded as a number from 0 to 86832, capable of being stored in 2.5 printable ASCII characters (actually 2.497, leaving just enough room to encode for a greyscale bit).
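The arithmetic behind those figures can be checked with a short sketch. It mirrors the mixed-radix packing used by `to_num` / `from_num` in the posted `my_geom` module, but `pack_region` and `unpack_region` are illustrative names and take a flat raster index rather than x/y coordinates.

```python
import math

RASTER_POINTS = 402          # grid positions available for a centroid
PALETTE = 6 * 6 * 6          # 216 colors, 6 levels per channel
MAX_NUM = RASTER_POINTS * PALETTE


def pack_region(raster_index, r, g, b):
    """Combine a raster position and a 6-level RGB color into one number."""
    return raster_index * PALETTE + r * 36 + g * 6 + b


def unpack_region(num):
    """Inverse of pack_region."""
    raster_index, rgb = divmod(num, PALETTE)
    return raster_index, rgb // 36, rgb // 6 % 6, rgb % 6


chars_per_region = math.log(MAX_NUM, 95)   # characters of base-95 text per region
print(MAX_NUM, round(chars_per_region, 3))
print(unpack_region(pack_region(401, 5, 0, 3)))
```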

If an image is worth 1000 words, how much of an image can you fit in 114.97 bytes?

2D Shapes (21×15 luminance, 7×5 chroma)

More specifically, each of the additional 14 regions is converted to a number, and then each of these numbers is concatenated together (multiplying the current value by 86832, and adding the next). This (gigantic) number is then converted to a permutation on 56 objects.
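A compact Python 3 sketch of the number-to-permutation mapping, using the factorial number system in the same way as the `num2perm` / `perm2num` helpers in the posted code. The names here are illustrative. The last line checks that 56! really does leave room for 14 extra regions plus the width, height and region-count fields (85 × 85 × 25) that the posted encoder packs alongside them.

```python
import math


def num_to_perm(num, size):
    """Map an integer in [0, size!) to a permutation of range(size)."""
    perm = [0] * size
    for i in range(size - 1, -1, -1):
        num, perm[i] = divmod(num, size - i)     # next factorial-base digit
        for j in range(i + 1, size):
            if perm[j] >= perm[i]:               # make room for the new value
                perm[j] += 1
    return perm


def perm_to_num(perm):
    """Inverse of num_to_perm: count, per position, the smaller items after it."""
    num = 0
    size = len(perm)
    for i in range(size):
        num *= size - i
        num += sum(1 for j in range(i + 1, size) if perm[j] < perm[i])
    return num


hidden = 86832 ** 14 - 1                         # largest value 14 regions can take
perm = num_to_perm(hidden, 56)
print(sorted(perm) == list(range(56)), perm_to_num(perm) == hidden)
print(math.factorial(56) > 86832 ** 14 * 85 * 85 * 25)
```

Because only the relative order matters, the decoder can recover the number from the 56 region values themselves; it never needs to see the permutation written as 0..55.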

0Jc?NsbD1WDuqT]AJFELu!iE3d!BBjOALj!lCWXkr:gCXuD=D\BLgA\ 8*RKQ*tv\\3V0j;_4o7Xage-N85):Q/Hl4.t&0pp)dRy+?xrA6u&2E!Ls]i]T~)58%RiA

are off-topic, as they make it impossible to indisputably decide which entry should win. Dennis

Ability to do a reasonable job of compressing a wide variety of images, including those not listed as a sample image

I feel like I would want to patent a solution to this.

This is a method based on superpixel interpolation. To begin, each image is divided into 70 similar sized regions of similar color. For example, the landscape picture is divided in the following manner:

OK, took me a while, but here it is. All images in greyscale. Colors took too many bits to encode for my method 😛

The decoding process cannot access or contain the original images in any way.

I bring it down to a modified version of the RISC-OS palette – basically, because I needed a 32 colour palette, and that seemed like a good enough place to start. This could do with some changing too I think.

When the --greyscale option is used with the encoder, 94 regions are used instead (separated 70, 24), with 558 raster points, and 16 shades of grey.

This question appears to be off-topic. The users who voted to close gave this specific reason:

Added an encoder option to control the segmentation ratio, and a decoder option to turn off blending.

I break it down into the following shapes:

Based on the very successful Twitter image encoding challenge at Stack Overflow.

Thank you, primo, I truly appreciate that. I always admire your work, so hearing you say that is quite flattering!

I challenge you to come up with a general-purpose method to compress images into a standard Twitter comment that contains only printable ASCII text.

    # decoder (Python 2)
    from __future__ import division
    import argparse
    from PIL import Image, ImageDraw, ImageChops, ImageFilter
    from my_geom import *

    def decode(instr, no_blending=False):
      # read the tweet as one big base-95 number
      innum = 0
      for c in instr:
        innum *= 95
        innum += ord(c) - 32

      greyscale = innum%2
      innum //= 2

      if greyscale:
        max_num = 8928
        set1_len = 70
        image_mode = 'L'
        default_color = 0
        raster_ratio = 11
      else:
        max_num = 86832
        set1_len = 56
        image_mode = 'RGB'
        default_color = (0, 0, 0)
        raster_ratio = 13

      # the regions that are stored directly
      nums = []
      for i in range(set1_len):
        nums = [innum%max_num] + nums
        innum //= max_num

      # their ordering hides the remaining regions and the raster size
      set2_num = perm2num(nums)

      set2_len = set2_num%25
      set2_num //= 25

      raster_height = set2_num%85
      set2_num //= 85
      raster_width = set2_num%85
      set2_num //= 85

      resize_width = raster_width*raster_ratio
      resize_height = raster_height*raster_ratio

      for i in range(set2_len):
        nums += set2_num%max_num,
        set2_num //= max_num

      regions = []
      for num in nums:
        r = Region()
        r.from_num(num, raster_width, greyscale)
        regions += r,

      outimage = Image.new(image_mode, (resize_width, resize_height), default_color)

      # paint each region's Voronoi cell: intersect the half-planes
      # that are closer to a than to every other region b
      for a in regions:
        mask = Image.new('L', (resize_width, resize_height), 255)
        for b in regions:
          if a==b: continue
          submask = Image.new('L', (resize_width, resize_height), 0)
          poly = a.centroid.bisected_poly(b.centroid, resize_width, resize_height)
          ImageDraw.Draw(submask).polygon(poly, fill=255, outline=255)
          mask = ImageChops.multiply(mask, submask)
        outimage.paste(a.avg_color, mask=mask)

      if not no_blending:
        outimage = outimage.resize((raster_width, raster_height), Image.ANTIALIAS)
        outimage = outimage.resize((resize_width, resize_height), Image.BICUBIC)
        smooth = ImageFilter.Kernel((3,3),(1,2,1,2,4,2,1,2,1))
        for i in range(20): outimage = outimage.filter(smooth)
      outimage.show()

    parser = argparse.ArgumentParser(description='Decodes a tweet into an image.')
    parser.add_argument('--no-blending', dest='no_blending', action='store_true', help='Do not blend the borders in the final image.')

    args = parser.parse_args()

    instr = raw_input()
    decode(instr, args.no_blending)

I'll give it some more work later to try to fix those, and improved

Works by dividing the image into regions recursively. I try to divide regions with high information content, and pick the dividing line to maximize the difference in color between the two regions.

[0, 3, 33, 13, 26, 22, 54, 12, 53, 47, 8, 39, 19, 51, 18, 27, 1, 41, 50, 20, 5, 29, 46, 9, 42, 23, 4, 37, 21, 49, 2, 6, 55, 52, 36, 7, 43, 11, 30, 10, 34, 44, 24, 45, 32, 28, 17, 35, 15, 25, 48, 40, 38, 31, 16, 14] 9

This is a somewhat subjective challenge, so the winner will (eventually) be judged by me. I will focus my judgment on a couple of important factors, listed below in decreasing importance:

The color version of the Mona Lisa looks like one of her boobs popped. Jesting aside, this is incredible.

Each division is encoded using a few bits to encode the dividing line. Each leaf region is encoded as a single color.
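A back-of-the-envelope sketch of how many splits fit in one tweet, using the bit costs stated in the comments of the Go source in this post (13 bits per leaf, 7 bits per split, 919 bits in total, and two bits per halving step for each dimension). The function names are hypothetical and the 1024×768 input is just an example size.

```python
TOTAL_BITS = 919
LEAF_BITS = 13                      # 1 flag bit + 3 x 4 color bits
SPLIT_BITS = 7                      # 1 flag bit + 2 anchor bits + 4 angle bits
# A split turns one leaf into a split node plus two leaves.
NET_SPLIT_COST = SPLIT_BITS + 2 * LEAF_BITS - LEAF_BITS    # 20 bits


def header_bits(size):
    """Bits used to store one dimension: 2 per halving step, plus a final 2."""
    bits = 2
    while size > 3:
        bits += 2
        size >>= 1
    return bits


def max_splits(width, height):
    """Number of splits that fit, and the total bits they consume."""
    used = header_bits(width) + header_bits(height) + LEAF_BITS
    splits = 0
    while used <= TOTAL_BITS - NET_SPLIT_COST:
        used += NET_SPLIT_COST
        splits += 1
    return splits, used


splits, used = max_splits(1024, 768)
print(splits, splits + 1, used)     # splits, leaf regions, bits used
```

For a 1024×768 source this gives 43 splits, that is 44 flat-colored leaf regions, using 911 of the 919 available bits.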

My first attempt. This has room for improvement. I think that the format itself actually works; the issue is in the encoder. That, and I'm missing individual bits from my output… my (slightly higher quality than here) file ended up at 144 characters, when there should have been some left. (And I really wish there were; the differences between these and those are noticeable.) I learnt, though, never to overestimate how big 140 characters is…

I encode my bitstream with a type of base64 encoding. Before it's encoded into readable text, here's what happens.


    # encoder (Python 2)
    from __future__ import division
    import argparse, numpy
    from skimage.io import imread
    from skimage.transform import resize
    from skimage.segmentation import slic
    from skimage.measure import regionprops
    from my_geom import *

    def encode(filename, seg_ratio, greyscale):
      img = imread(filename)

      height = len(img)
      width = len(img[0])
      ratio = width/height

      if greyscale:
        raster_size = 558
        raster_ratio = 11
        num_segs = 94
        set1_len = 70
        max_num = 8928 # 558 * 16
      else:
        raster_size = 402
        raster_ratio = 13
        num_segs = 70
        set1_len = 56
        max_num = 86832 # 402 * 216

      raster_width = (raster_size*ratio)**0.5
      raster_height = int(raster_width/ratio)
      raster_width = int(raster_width)

      resize_height = raster_height * raster_ratio
      resize_width = raster_width * raster_ratio

      img = resize(img, (resize_height, resize_width))

      # superpixel segmentation
      segs = slic(img, n_segments=num_segs-4, ratio=seg_ratio).astype('int16')

      # relabel segment 0 so that every label is positive
      max_label = segs.max()
      numpy.place(segs, segs==0, [max_label+1])
      regions = [None]*(max_label+2)

      for props in regionprops(segs):
        label = props['Label']
        props['Greyscale'] = greyscale
        regions[label] = Region(props)

      # merge regions whose centroids snap to the same raster point
      for i, a in enumerate(regions):
        for j, b in enumerate(regions):
          if a is None or b is None or a is b: continue
          if a.centroid == b.centroid:
            numpy.place(segs, segs==j, [i])
            regions[j] = None

      for y in range(resize_height):
        for x in range(resize_width):
          label = segs[y][x]
          regions[label].add_point(img[y][x])

      regions = [r for r in regions if r is not None]

      # keep only the largest regions if there are too many
      if len(regions)>num_segs:
        regions = sorted(regions, key=lambda r: r.area)[-num_segs:]

      regions = sorted(regions, key=lambda r: r.to_num(raster_width))

      set1, set2 = regions[-set1_len:], regions[:-set1_len]

      # pack the extra regions and the raster size into one number...
      set2_num = 0
      for s in set2:
        set2_num *= max_num
        set2_num += s.to_num(raster_width)

      set2_num = ((set2_num*85 + raster_width)*85 + raster_height)*25 + len(set2)
      # ...and hide it in the ordering of the first set
      perm = num2perm(set2_num, set1_len)
      set1 = permute(set1, perm)

      outnum = 0
      for r in set1:
        outnum *= max_num
        outnum += r.to_num(raster_width)

      outnum *= 2
      outnum += greyscale

      # write the number out as 140 printable ASCII characters
      outstr = ''
      for i in range(140):
        outstr = chr(32 + outnum%95) + outstr
        outnum //= 95

      print outstr

    parser = argparse.ArgumentParser(description='Encodes an image into a tweetable format.')
    parser.add_argument('filename', type=str, help='The filename of the image to encode.')
    parser.add_argument('--ratio', dest='seg_ratio', type=float, default=30, help='The segmentation ratio. Higher values (50+) will result in more regular shapes, lower values in more regular region color.')
    parser.add_argument('--greyscale', dest='greyscale', action='store_true', help='Encode the image as greyscale.')

    args = parser.parse_args()

    encode(args.filename, args.seg_ratio, args.greyscale)

4PV 9G7XpC[Czd!5&rA5 Eo1Q\+m5t:r;H65NIggfkwh4*gs.:~btVuVL7V8Ed5`ft7eHMHrVVUXc.7APBm,i1B781.K8s(yUV?a*!twZ.wuFnN dp

Compression time. Although not as important as how well an image is compressed, faster programs are better than slower programs that do the same thing.

Your program must accept images in at least one of these formats (not necessarily more): Bitmap, JPEG, GIF, TIFF, PNG. If some or all of the sample images are not in the correct format, you can convert them yourself prior to compression by your program.

. I'd need to come up with a clever way to uniquely number all partitions of

Binary trees work like this: going from bit to bit, 0 means go left, 1 means go right. Keep going until you hit a leaf on the tree, or a dead end. The leaf you end up on is the character you want.

Ability to preserve outlines and colors of the minor details in an image

Mountainous Landscapes 1024×768 – Get it before it's gone! /VaCzpRL.jpg–

0VW*`Gnyq;c1JBYtjrOcKm)v_Ac\S.r[,Xd_(qT6 ]!xOfU9~0jmIMGhcg-*a.sX]6*%U5/FOze?9\iA7P ]a-7eC&ttS[]KNwN-^$T1E.1OH^c0^J 4V9X

Mountains (19×14 luminance, 6×4 chroma)

Encoding requires numpy, SciPy and scikit-image.

Ability to preserve the outlines of the major elements in an image

Ability to compress the colors of the major elements in an image